Chapter 8 Value Function Methods

Chapter 8 Value Function Methods

In this chapter we move from previous tabular representation for state/action value to function representation. That is to say, we use a function to fit the true expression of the state/action value function. Such a function can be predifined, e.g., a linear function, or a neural network.

The reason why we move from tabular-based representation to function-based are:

- storage, compared with tabular, we only need to store a few parameters describing the function.

- generalization, action and state may be infinite (or continuous), we cannot store all the state/action value. We need to find a unified representation.

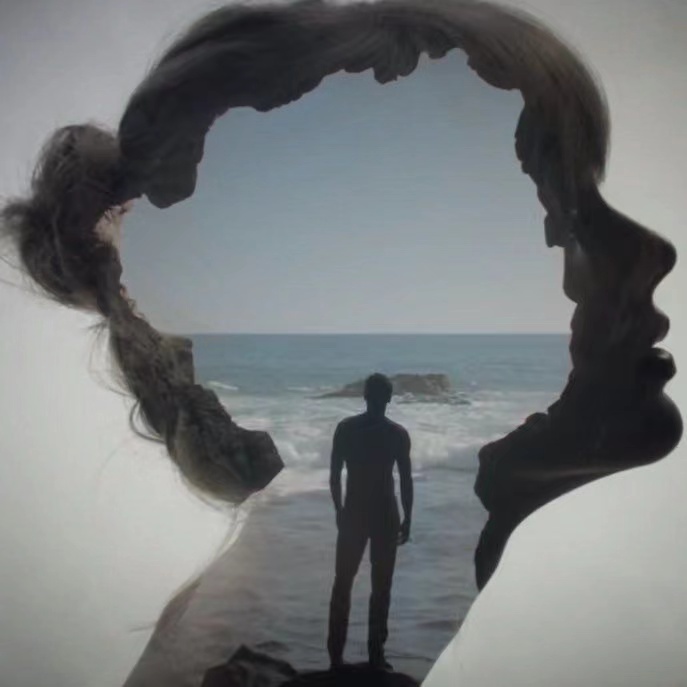

As shown in the figure, we update parameters to update the value.

To emphasize, the difference between TD learning with approximation and the previous one is that the former uses TD learning to estimate the value and then continuously updates the action value based on the RM algorithm. TD learning with approximation, on the other hand, is based on SGD (which is essentially also an RM algorithm), using TD learning to estimate the value and continuously updating the parameter values used to approximate the action value function.

Function-based Value representation

A simple linear function approximation:

here

- is the parameter vector

- is the feature vector of

- is linear in

We can also fit the points using a second-order curve:

In this case,The dimensions of and increase, but the values may be fitted more accurately. Although is nonlinear in , it is linear in . The nonlinearity is contained in .

we can use even higher-order polynomial curves or other complex curves to fit the dots, which we can better approximate true value function but needs more parameter.

TD learning of state values based on function approximation

objective

As we are trying to fit the value function, our optimization objective is:

here we invovle the random variable , if the state is in uniform, we have:

however, we can write it into a more general form:

here is called the the stationary distribution of the Markov process under policy . That is, the probability for the agent visiting after a long period of time is . By definition, .

It is notable that the value of is nontrivial to obtain because it requires knowing the state transition probability matrix . Fortunately, we do not need to calculate the specific value of to minimize this objective function as shown in the next subsection. If the state space is continuous, we can replace the summations with integrals in the above equation.

Stationary distribution

Once a policy is given, the MDP becomes a Markov process, and its dynamics are governed by the probability transition matrix .

Let be a vector representing the probability distribution of the states at the initial time step. The probability distribution vector after exactly steps, denoted as , can be formulated in matrix-vector form as:

here, represents the probability matrix of the agent transitioning from state to after exactly steps under policy .

When analyzing the long-term behavior of the Markov process, it holds under certain conditions that the matrix converges as approaches infinity:

Here, , which means is a constant matrix where all of its rows are equal to . Substituting this limit back into the state evolution equation yields:

This mathematical derivation is valid because the sum of the initial probabilities equals one (). This Equation demonstrates that the state distribution converges to a constant vector , defined as the limiting distribution. Notably, this long-term probability distribution is independent of the initial distribution .

The analytical value of can be calculated by leveraging the recursive nature of the transition process. By taking the limit of both sides of the iterative equation , we obtain:

Since both distributions converge as , this directly resolves to the stationary equation:

In linear algebra, the left eigenvector and its corresponding eigenvalue of a matrix satisfy the definition .

Comparing this to the steady-state equation , it is clear that is precisely the left eigenvector of the probability transition matrix corresponding to the eigenvalue . when transforming the formula to , we find the corresponding eigenvector with an eigenvalue of 1 by solving this homogeneous linear system of equations.

Optimization

We can adopt the gradient descent method to optimaize the objective:

where

Therefore, the gradient descent algorithm is

where the coefficient before can be merged into without loss of generality. The algorithm requires calculating the expectation. In the spirit of stochastic gradient descent (SGD), we can replace the true gradient with a stochastic gradient. Then, we have

where is a sample of at time . It requires the true state value , which is unknown and must be estimated. We can replace with an approximation to make the algorithm implementable.

The following two methods can be used to do so.

- Monte Carlo method: Suppose that we have an episode . Let be the discounted return starting from . Then, can be used as an approximation of . The algorithm in (8.12) becomes:

This is the algorithm of Monte Carlo learning with function approximation.

- Temporal-difference method: In the spirit of TD learning, can be used as an approximation of . The algorithm becomes

This is the algorithm of TD learning with function approximation.

We can use the function introduce in Function-based Value representation to represent which function is we use to approximate the true value function. If we use linear approximation function, the gradient is . We can also use neural network.

Theoretical analysis

For the convergence, see at book.

The true state-value function strictly satisfies the Bellman equation: . When we introduce a function approximator (e.g., a linear function or neural network with parameters ), we hope that the estimated value also satisfies this equation as much as possible. Therefore, the difference between the two sides of the equation is the Bellman error:

The Bellman error often cannot be optimized to 0 because the function approximators we use (such as low-dimensional linear features) have limited approximation ability, the result mapped by the Bellman operator often "runs out" of the space that our function approximator can represent.

That error leads to that the truly optimized objective is not the objective we talk in previous section but the objective called the projected Bellman error.

here, is an orthogonal projection matrix. Its function is to geometrically project any vector back into the space that the approximator can represent. Since itself is in the approximation space, and the target is also projected into this space, there must exist a parameter that can reduce the projection error to 0. In fact, the solution converged by the classic TD Learning algorithm is precisely the solution that minimizes the projection Bellman error.

TD learning of action values based on function approximation

Here we use function to approximate action values.

The Sarsa algorithm with function approximation can be readily obtained by replacing the state values with action values in TD learning of state values based on function approximation section. In particular, suppose that is approximated by . Replacing by gives:

Tabular Q-learning can also be extended to the case of function approximation. The update rule is

Deep Q-learning

Mathematically, deep Q-learning aims to minimize the following objective function:

where are random variables that denote a state, an action, the immediate reward, and the next state, respectively. This objective function can be viewed as the squared Bellman optimality error. That is because is the Bellman optimality equation. Therefore, should equal zero in the expectation sense when can accurately approximate the optimal action values. This means that DQN aligns with Q-learning mathematicaly.

To minimize this objective function, we can also use the gradient descent algorithm. To that end, we need to calculate the gradient of with respect to . It is noted that the parameter appears not only in but also in . As a result, it is nontrivial to calculate the gradient. For the sake of simplicity, it is assumed that the value of in is fixed (for a short period of time) so that the calculation of the gradient becomes much easier. In particular, we introduce two networks:

- Main Network: represent , namely the approximation of action value.

- Target network : represent , namely the approximation of our target action value (optimal action value).

The objective function in this case becomes:

where is the target network's parameter. When is fixed, the gradient of is

where some constant coefficients are omitted without loss of generality.

The main network is updated in every iteration. By contrast, the target network is set to be the same as the main network every certain number of iterations to satisfy the assumption that is fixed when calculating the gradient.

As we introduce the deep neural network here, previous incremental updates based on temporal sequence is no longer suitable anymore (we will introduce reasons later). Thus, we need to introduce one technique called experience reply. That is, after we have collected some experience samples, we do not use these samples in the order they were collected. Instead, we store them in a dataset called the replay buffer. In particular, let be an experience sample and be the replay buffer. Every time we update the main network, we can draw a mini-batch of experience samples from the replay buffer.

Why is experience replay necessary in deep Q-learning, and why must the replay follow a uniform distribution? The answer lies in the objective function of DQN. In particular, to well define the objective function, we must specify the probability distributions for . The distributions of and are determined by the system model once is given. The simplest way to describe the distribution of the state-action pair is to assume it to be uniformly distributed. However, the state-action samples may not be uniformly distributed in practice since they are generated as a sample sequence according to the behavior policy. It is necessary to break the correlation between the samples in the sequence to satisfy the assumption of uniform distribution. To do this, we can use the experience replay technique by uniformly drawing samples from the replay buffer. This is the mathematical reason why experience replay is necessary and why experience replay must follow a uniform distribution.

The reason why previous Sarsa and Q-learning (becomes DQN) do not adopt the experience reply is that we assume we use the linear approximation function. It can be proved that when adotping linear approximation is convergent when the State follows the stationary distribution we talked before. In this circumstance, when the algorithm collects and updates data online in the order of time steps , these samples naturally follow a stationary distribution of the current behavior strategy. Therefore, in the mathematical derivation, the algorithm directly uses the sample sequence naturally generated by the Markov chain to approximate the expectation, without needing to construct an additional distribution.

However, when with deep neural network, both Sarsa and Q-learning should follow experience reply like DQN (Deep Sarsa is hard to implement as it is on-policy, which is contradictory with experience replay, as Sarsa's updates rely on the formula , where must be the actual sampled value at from the current latest policy. If you store data from hundreds of thousands of steps ago in a global replay buffer and then extract it for training, that action will no longer be the action the current policy would take. Using a replay buffer directly undermines Sarsa's on-policy theoretical foundation, causing the algorithm to evaluate something other than the current policy.).